SSIS – Part 2

SSIS Interview Questions and Answers for Experienced and Fresher’s

Here we are publishing series of posts on SSIS Interview questions and answers Part 2 for experienced and freshers . Below is the series 2.

Q. What is a breakpoint in SSIS?

Ans:

A breakpoint is a stopping point in the code. The breakpoint can give the Developer\DBA an opportunity to review the status of the data, variables and the overall status of the SSIS package.

Breakpoints are setup in BIDS. In BIDS, navigate to the control flow interface. Right click on the object where you want to set the breakpoint and select the ‘Edit Breakpoints…’ option.

Q. Can you name 5 or more of the native SSIS connection managers?

Ans:

- OLEDB connection – Used to connect to any data source requiring an OLEDB connection (i.e., SQL Server)

- Flat file connection – Used to make a connection to a single file in the File System. Required for reading information from a File System flat file

- ADO.Net connection – Uses the .Net Provider to make a connection to SQL Server 2005 or other connection exposed through managed code (like C#) in a custom task

- Analysis Services connection – Used to make a connection to an Analysis Services database or project. Required for the Analysis Services DDL Task and Analysis Services Processing Task

- File connection – Used to reference a file or folder. The options are to either use or create a file or folder

- Excel

- FTP

- HTTP

- MSMQ

- SMO

- SMTP

- SQL Mobile

- WMI

Q. How do you eliminate quotes from being uploaded from a flat file to SQL Server?

Ans:

In the SSIS package on the Flat File Connection Manager Editor, enter quotes into the Text qualifier field then preview the data to ensure the quotes are not included.

Q. Can you name 5 or more of the main SSIS tool box widgets and their functionality?

Ans:

- ActiveX Script Task

- Analysis Service Processing Task

- Analysis Services Execute DDL Task

- Backup Database Task

- Bulk Insert Task

- CDC Control Task

- Check Database Integrity Task

- Data Flow Task

- Data Mining Query Task

- Data Profiling Task

- Execute DTS 2000 Package Task – Till 2008

- Execute Package Task

- Execute Process Task

- Execute SQL Server Agent Job Task

- Execute SQL Task

- Execute T-SQL Statement Task

- Expression Task

- File System Task

- For Loop Container

- Foreach Loop Container

- FTP Task

- History Cleanup Task

- Maintenance Cleanup Task

- Message Queue Task

- Notify operator Task

- Rebuild Index Task

- Reorganize Index Task

- Script Task

- Send Mail Task

- Sequence Container

- Shrink Datbase Task

- Transfer Database Task

- Transfer error message

- Transfer Jobs Task

- Transfer Logins Task

- Transfer Mastor Stored Procedures Task

- Transfer SQL Server Object Task

- Update Ststistics Task

- Web Service Task

- WMI Datareader Task

- WMI Event Watcher Task

- XML Task

Q. Can you explain one approach to deploy an SSIS package?

Ans:

- One option is to build a deployment manifest file in BIDS, then copy the directory to the applicable SQL Server then work through the steps of the package installation wizard

- A second option is using the dtutil utility to copy, paste, rename, delete an SSIS Package

- A third option is to login to SQL Server Integration Services via SQL Server Management Studio then navigate to the ‘Stored Packages’ folder then right click on the one of the children folders or an SSIS package to access the ‘Import Packages…’ or ‘Export Packages…’option.

- A fourth option in BIDS is to navigate to File | Save Copy of Package and complete the interface.

Q. Can you explain how to setup a checkpoint file in SSIS?

Ans:

The following items need to be configured on the properties tab for SSIS package:

CheckpointFileName – Specify the full path to the Checkpoint file that the package uses to save the value of package variables and log completed tasks. Rather than using a hard-coded path, it’s a good idea to use an expression that concatenates a path defined in a package variable and the package name.

CheckpointUsage – Determines if/how checkpoints are used. Choose from these options: Never (default), If Exists, or Always. Never indicates that you are not using Checkpoints. “If Exists” is the typical setting and implements the restart at the point of failure behavior. If a Checkpoint file is found it is used to restore package variable values and restart at the point of failure. If a Checkpoint file is not found the package starts execution with the first task. The Always choice raises an error if the Checkpoint file does not exist.

SaveCheckpoints – Choose from these options: True or False (default). You must select True to implement the Checkpoint behavior.

Q. Would you recommend using “Check Points” in SSIS?

Ans:

As per my experience I could say “NO” as there are compatibility issues with various options hence using checkpoints may give unpredictable results. Checkpoints doesn’t work properly when a SSIS package contains

- Complex logic

- Iterations/Loops

- Transactions Enabled

- “Object” type variables

- Parallel execution

Checkpoints works fine when the package is having straightforward control flow with a single thread.

Q. Can you explain different options for dynamic configurations in SSIS?

Ans:

- Use an XML file

- Use custom variables

- Use a database per environment with the variables

- Use a centralized database with all variables

Q. How do you upgrade an SSIS Package?

Ans:

Depending on the complexity of the package, one or two techniques are typically used:

- Recode the package based on the functionality in SQL Server DTS.

- Use the Migrate DTS 2000 Package wizard in BIDS and then recode any portion of the package that is not accurate

Q. Can you name five of the Perfmon counters for SSIS and the value they provide?

Ans:

SQLServer: SSIS Service

SSIS Package Instances – Total number of simultaneous SSIS Packages running

SQLServer: SSIS Pipeline

BLOB bytes read – Total bytes read from binary large objects during the monitoring period.

BLOB bytes written – Total bytes written to binary large objects during the monitoring period.

BLOB files in use – Number of binary large objects files used during the data flow task during the monitoring period.

Buffer memory: The amount of physical or virtual memory used by the data flow task during the monitoring period.

Buffers in use – The number of buffers in use during the data flow task during the monitoring period.

Buffers spooled – The number of buffers written to disk during the data flow task during the monitoring period.

Flat buffer memory – The total number of blocks of memory in use by the data flow task during the monitoring period.

Flat buffers in use – The number of blocks of memory in use by the data flow task at a point in time.

Private buffer memory – The total amount of physical or virtual memory used by data transformation tasks in the data flow engine during the monitoring period.

Private buffers in use – The number of blocks of memory in use by the transformations in the data flow task at a point in time.

Rows read – Total number of input rows in use by the data flow task at a point in time.

Rows written – Total number of output rows in use by the data flow task at a point in time.

Q. How do you handle errors in ssis?

Ans:

When a data flow component applies a transformation to column data, extracts data from sources, or loads data into destinations, errors can occur. Errors frequently occur because of unexpected data values.

Errors typically fall into one the following categories:

Data conversion errors: occurs if a conversion results in loss of significant digits, the loss of insignificant digits, and the truncation of strings. Data conversion errors also occur if the requested conversion is not supported.

Expression evaluation errors: occurs if expressions that are evaluated at run time perform invalid operations or become syntactically incorrect because of missing or incorrect data values.

Lookup errors: occurs if a lookup operation fails to locate a match in the lookup table.

Many data flow components support error outputs, which let you control how the component handles row-level errors in both incoming and outgoing data. You specify how the component behaves when truncation or an error occurs by setting options on individual columns in the input or output.

Q. How do you do Logging in SSIS?

Ans:

- SSIS includes logging features that write log entries when run-time events occur and can also write custom messages.

- The Integration Services log providers can write log entries to text files, SQL Server Profiler, SQL Server, Windows Event Log, or XML files.

- Logs are associated with packages and are configured at the package level. Each task or container in a package can log information to any package log. The tasks and containers in a package can be enabled for logging even if the package itself is not.

To enable logging in a package:

- In Business Intelligence Development Studio, open the Integration Services project that contains the package you want.

- On the SSIS menu, click Logging.

- Select a log provider in the Provider type list, and then click Add.

Q. Demonstrate how you would suggest using configuration files in packages. Would you consider it a best practice to create a configuration file for each connection manager or one for the entire package?

Ans:

There should be a single configuration file for each connection manager in your packages that stores their connection string information. So if you have 6 connection managers then you have 6 config files. You can use the same config file across all your packages that use the same connections.

If you have a single config file that stores all your connection managers then all your packages must have contain the connection managers that are stored in that config file. This means you may have to put connection managers in your package that you don’t even need.

Q. Demonstrate how checkpoints work in a package.

Ans:

When checkpoints are enabled on a package if the package fails it will save the point at which the package fails. This way you can correct the problem then rerun from the point that it failed instead of rerunning the entire package. The obvious benefit to this is if you load a million record file just before the package fails you don’t have to load it again.

Q. Demonstrate how transactions work in a package.

Ans:

If transactions are enabled on your package and tasks then when the package fails it will rollback everything that occurred during the package. First make sure MSDTC (Microsoft Distributed Transaction Coordinator) is enabled in the Control Panel -> Administrative Tools -> Component Services. Transactions must be enabled not only on the package level but also on each task you want included as part of the transaction. To have the entire package in a transaction set Transaction Option at the package level to Required and each task to Supported.

Q. If you have a package that runs fine in Business Intelligence Development Studio (BIDS) but fails when running from a SQL Agent Job what would be your first guess on what the problem is?

Ans:

The account that runs SQL Agent Jobs likely doesn’t have the needed permissions for one of the connections in your package. Either elevate the account permissions or create a proxy account.

To create a proxy account you need to first create new credentials with the appropriate permissions. Next assign those credentials to a proxy account. When you run the job now you will select Run As the newly created proxy account.

Q. What techniques would you consider to add auditing to your packages? You’re required to log when a package fails and how many rows were extracted and loaded in your sources and destinations.

Ans:

I like to create a database that is designated for package auditing. Track row counts coming from a source and which actually make it to a destination. Row counts and package execution should be all in one location and then optionally report off that database.

There are also third party tools that can accomplish this for you (Pragmatic Works BI xPress).

Q. Demonstrate or whiteboard techniques you would use to for CDC (Change Data Capture)? Tell how you would write a package that loads data but first detects if the data already exists, exists but has changes, or is brand new data for a destination.

Ans:

For small amounts of data I may use the Slowly Changing Dimension. More often than not the data is too large to use in such a slow transform.

I prefer to do a lookup on the key of the target table and rows that don’t match are obviously new rows that can be inserted. If they do match it’s possible they are updates or duplicates. Determine this by using a conditional split comparing rows from the target to incoming rows. Send updates to a staging table that can then be updated in an Execute SQL Task.

Explain that putting updates in a staging table instead of updating using the OLE DB Command is much better for performance because the Execute SQL Task performs a bulk operation.

Q. Explain what breakpoints are and how you would use them.

Ans:

Breakpoints put pauses in your package. It’s a great tool for debugging a package because you can place a breakpoint on a task and it will pause the package based on execution events.

A reason in which I have used breakpoints is when I have a looping container and I want to see how my variables are changed by the loop. I would place a watch window on the package and type the variable name in. Set a break point on the container the stop after each iteration of the loop.

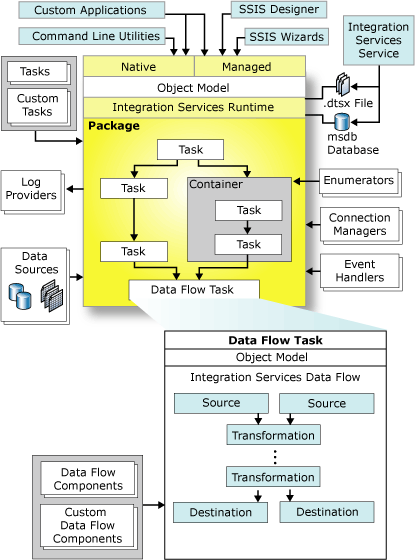

Q. What are the main components involved in SSIS?

Ans:

- SSIS is not improved version of DTS

- SSIS is completely redesigned and build from ground up using .NET code.

- SSIS is mainly divided into two parts.

- Data Transformation Pipeline (DTP) – Data Flow

- Data Transformation Runtime (DTR) – Control Flow

- In SQL SERVER 7 / 2000 the data flow is stronger than control flow but in SSIS both are in the same level

Q. What is the work of DTP Engine?

Ans:

- DTP consists of DTP Engine and DTP Object model

- DTP uses Data Adapters to connect source and destination

- DTP engine uses DTP Object Model which is nothing but an API.

- SSIS comes with adapters for SQL Server databases, XML, flat files, and other OLE DB–compliant data sources

- The job of the data adapters is to make connections to the data’s source and destination endpoints

- The job of the transformations is to move and optionally manipulate the data as it’s moved between the source and destination endpoints.

Q. How the DTR works in SSIS?

Ans:

- The DTR consists of the DTR engine and the DTR components.

- DTR components are objects that enable you to govern the execution of SSIS packages.

- The primary DTR components are packages, containers, and tasks.

- DTR engine stores package layout; runs packages; and provides debugging, logging, and event handling services.

- The DTR is accessed using the DTR object framework. The DTR run-time object framework is the API that supports the Integration Services Import/Export Wizard and the Integration Services Designer in addition to the command-line dtexec tool.

Q. Can you explain the SSIS Architecture?

Ans:

Runtime engine

The Integration Services runtime saves the layout of packages, runs packages, and provides support for logging, breakpoints, configuration, connections, and transactions.

API or object model

The Integration Services object model includes managed application programming interfaces (API) for creating custom components for use in packages, or custom applications that create, load, run, and manage packages. Developer can write custom applications or custom tasks or transformations by using any common language runtime (CLR) compliant language.

Integration Services service: It is a Windows service, monitors running SSIS packages and manages the storage of packages.

Data flow: It contains a data flow engine that manages the data flow components. There are 3 types of

Data Flow components – Source components (which extracts the data from a system), Transformation components (performs transformations, modifications onto the extracted data) and Load components (which simply performs the data loading tasks into the destination systems). Besides the available data flow components, we can write our own custom data flow components to accomplish any custom requirements.

References:

For more MSBI stuff please have a look at below references:

http://blogs.msdn.com/b/business-intelligence

https://sreenivasmsbi.wordpress.com

http://www.msbiguide.com

http://msbiravindranathreddy.blogspot.in

http://sqlschool.com/MSBI-Interview-Questions.html

https://www.katieandemil.com

http://www.venkateswarlu.co.in

http://www.sqlserverquest.com

http://www.msbiguide.com

Hi Uday….

windows 10 support for sql server 2012 installation….any prerequestis…

Shoukat,

Answer is Yes !

SQL 2012 should work fine on Windows 10. Make sure the correct dotnet framework is installed / enabled and also apply the latest SP for SQL Server 2012.

Happy Reading

http://www.udayarumilli.com

Hi UDAY,

In perfom counter question what is “during the monitoring period”

Can you elaborate it?

Dear Gopal,

Thanks for the visit and following.

When we need to check / monitor performance of a SSIS package we can do it in different ways and Performance Monitor is one of them. To monitor the performance we have to add the required performance counters, once ready with the package, execute the package and monitor the counter values in performance monitor and save the results for analysis. These values are captured during the monitoring period.

Happy Reading

The Team SQL

http://www.udayarumilli.com

Hi

Can u share any real time scenarios ? its helps me more

Dear Haneel,

Thanks for following.

We have shared few scenario based queries:

http://udayarumilli.com/ssis-interview-questions-and-answers-part-6/

Also we have been working on full list of questions and answers on SSIS and SSRS.

Thanks

SQL The One Team

http://www.udayarumilli.com

[…] SSIS – Part 2 […]